Prerender JavaScript Frontends

If you have spent a bit of time building JavaScript apps with React or Vue, I'm sure you've heard of server-side rendering (SSR) and static site generation (static sites). Both have their place in the JavaScript ecosystem, with the main objective of delivering complete HTML to the client (rather than having the client render the HTML after the JavaScript bundle has loaded).

Where SSR fails and static sites win

The problem with SSR, in my opinion, is that it's taken us back to having servers do rendering (which adds a layer of infrastructure complexity) whilst also adding another layer to your application. This means both the server and client app need to worry about state and routing.

This is why static sites have become so popular. Render your HTML at build time, upload it to a static host (Amplify Console, Netlify, Vercel, Gatsby Cloud, etc.) and let them worry about serving it. This has led to blazing-fast sites whilst still giving you the ability to create dynamic apps. If you're interested in learning more, look at Gatsby and Next (which this site is built on).

Where static sites fail

However, there is a tipping point where static sites start falling over at build time, and that is when there are a lot of pages to generate. This is also true when that content updates regularly.

Sidenote: The team at Gatsby are working on incremental builds which could solve this.

Another option: Prerendering

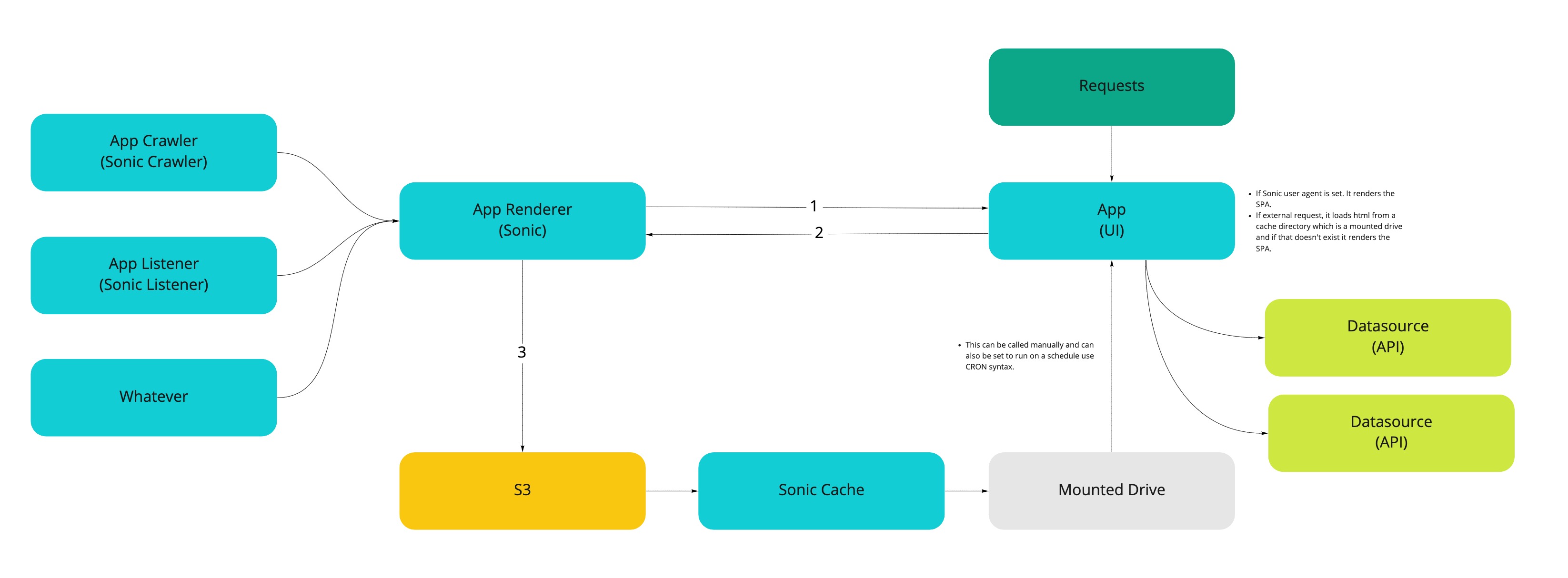

Instead of statically generating a site at build time or having to manage a server-rendered JavaScript app, we've built an asynchronous prerenderer dubbed Sonic, which fetches a JS app and waits for it to load completely before returning the HTML. This, coupled with a crawler and an S3 bucket, allows us to serve up-to-date HTML directly to the client. This gives us the following options:

- Crawl manually

- Crawl on application updates (code changes)

- Crawl on data source changes

- Crawl on a schedule (useful if you have no ability to listen for code/data changes)
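
The four options above can all feed the same crawler if each one is reduced to a uniform job. Here is a minimal sketch of that idea; the trigger shapes and the `toCrawlJob` helper are made up for illustration and are not Sonic's actual API:

```javascript
// Hypothetical trigger shapes, one per option listed above:
//   { kind: "manual", requestedBy }
//   { kind: "code-change", commit }
//   { kind: "data-change", source }
//   { kind: "schedule", intervalMinutes }

// Turn any trigger into a uniform crawl job so the crawler itself
// does not need to know why it was started.
function toCrawlJob(app, trigger) {
  switch (trigger.kind) {
    case "manual":
      return { app, reason: `manual crawl requested by ${trigger.requestedBy}` };
    case "code-change":
      return { app, reason: `deployed commit ${trigger.commit}` };
    case "data-change":
      return { app, reason: `data source ${trigger.source} changed` };
    case "schedule":
      return { app, reason: `scheduled every ${trigger.intervalMinutes} minutes` };
    default:
      throw new Error(`Unknown crawl trigger: ${trigger.kind}`);
  }
}
```

Keeping the "why" separate from the "what" means a new trigger type only needs a new case here, not a change to the crawler.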

Essentially, this is still static site generation, since the service generates the HTML for you. However, it is completely framework agnostic and does not alter our build time at all. This gives our teams complete autonomy over how they build our apps whilst still delivering on user experience and our SEO requirements.
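
To make the fetch, wait and store flow a bit more concrete, here is a rough sketch. It is not Sonic's real code: `prerenderAll`, `render` and `store` are hypothetical names, and the renderer is injected so the example does not depend on any particular tool (in practice it would likely wrap a headless browser).

```javascript
// Prerender each URL and store the resulting HTML.
// `render` and `store` are hypothetical, injected dependencies:
//   render(url)      -> Promise<string> of fully rendered HTML
//   store.put(k, v)  -> Promise<void>, e.g. backed by an S3 bucket
async function prerenderAll(urls, render, store) {
  const stored = [];
  for (const url of urls) {
    const html = await render(url); // wait for the app to finish loading
    await store.put(url, html);
    stored.push(url);
  }
  return stored;
}

// Usage with in-memory stand-ins for the renderer and the bucket.
const bucket = new Map();
const fakeRender = async (url) => `<html><body>Rendered ${url}</body></html>`;
const memoryStore = { put: async (key, html) => { bucket.set(key, html); } };

prerenderAll(["/", "/about"], fakeRender, memoryStore).then((keys) => {
  console.log(keys); // [ '/', '/about' ]
});
```

Because the function only sees "a URL in, HTML out", it does not care whether the app behind the URL is React, Vue or anything else.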

This is how a basic app would look:

We use S3 as the underlying storage layer, with "subfolders" for each environment and application. We can then mount these into the app's container, serving HTML directly from S3 (the latency is amazingly low).
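
As an illustration of the "subfolder" idea, a page's object key could be derived as below. The `<environment>/<application>/<path>/index.html` layout and the `htmlKey` helper are assumptions made for this sketch; the real bucket layout may differ.

```javascript
// Build the object key for a page, assuming a hypothetical
// "<environment>/<application>/<path>/index.html" layout.
function htmlKey(environment, application, pagePath) {
  // Drop leading/trailing slashes so "/" and "/about/" normalise cleanly.
  const trimmed = pagePath.replace(/^\/+|\/+$/g, "");
  const parts = [environment, application];
  if (trimmed !== "") parts.push(trimmed);
  parts.push("index.html");
  return parts.join("/");
}

htmlKey("production", "shop", "/");    // "production/shop/index.html"
htmlKey("staging", "shop", "/about/"); // "staging/shop/about/index.html"
```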
